extreme compression of real-time video streams

what if we could compress video streams by up to ~300x and then send them over the internet to genai models?

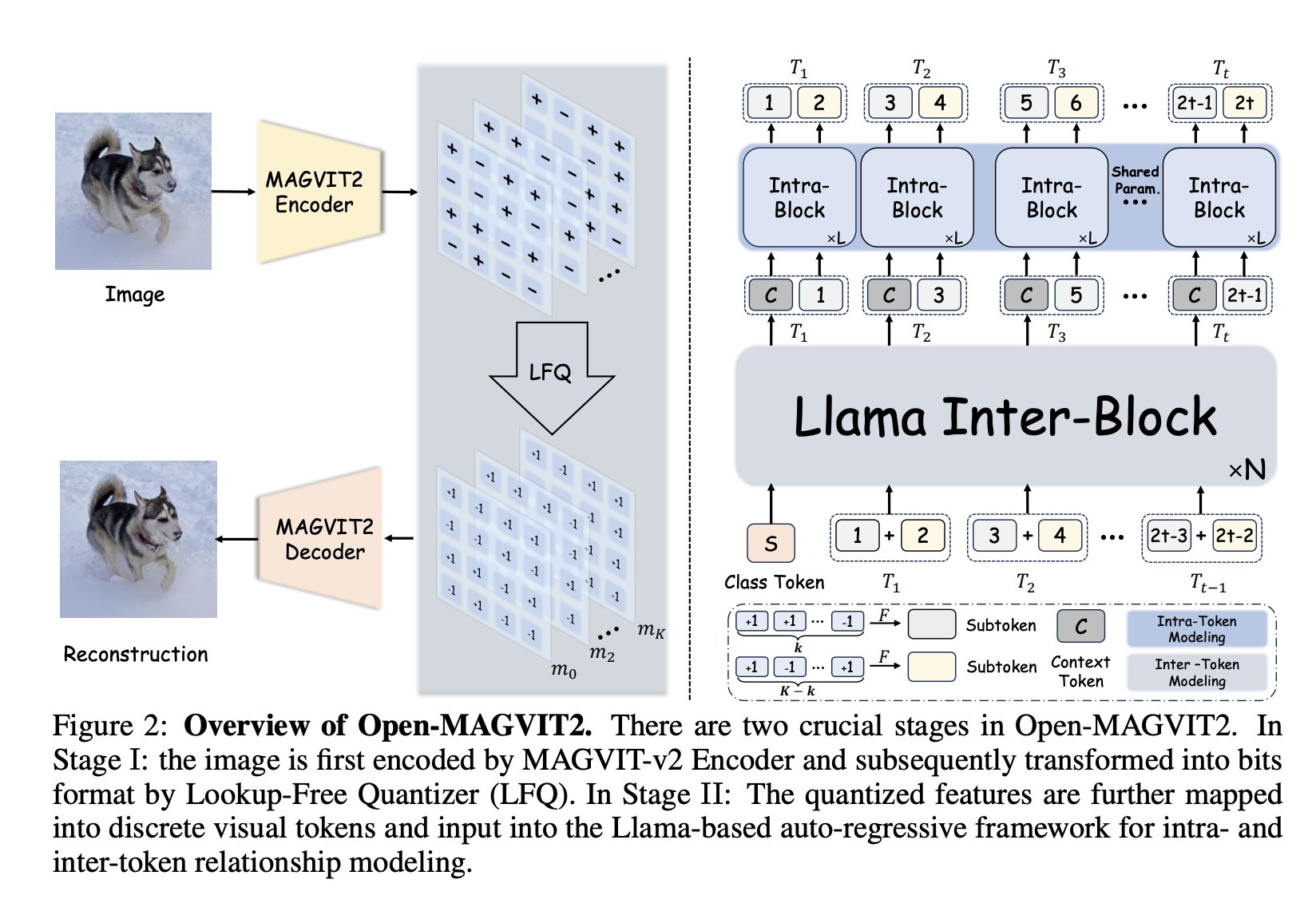

that’s exactly what i experimented with this weekend, using open-magvit-2 from tencent.

the premise

in the future, the main ingestors of video will be AI models, and they don’t consume media the way we do.

instead of consuming rgb values, they consume tokens.

in a traditional video streaming pipeline for inference, you still need to encode the video to h.264 on the client, then decode the h.264 on the server back into a tensor.

so why not run the tokenizer on the client, skip h.264 entirely, and send the tokens themselves to save bandwidth?

the simple math:

- a 128x128 rgb image has 3 channels per pixel, each 1 byte, so it takes 128x128x3 = 49,152 bytes

- magvit downsamples this to a 16x16 grid of tokens, where each token can be represented by 18 bits, so it takes 16x16x18/8 = 576 bytes

- 49,152/576 ≈ 85.3x compression (measured against raw rgb frames, not against h.264 output)

furthermore:

- magvit also downsamples in time: a 17-frame chunk goes down to 5 latent frames, so the compression ratio rises to 85.3×17/5 ≈ 290x
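the same arithmetic as a few lines of python, handy for trying other resolutions or bit widths. the helper names are made up for illustration; the inputs are just the numbers from the list above.

```python
# hypothetical helpers for the back-of-the-envelope math:
# raw 8-bit rgb frames vs. tightly packed token ids


def raw_bytes(height: int, width: int, channels: int = 3, frames: int = 1) -> int:
    """Size of uncompressed 8-bit frames in bytes."""
    return height * width * channels * frames


def token_bytes(grid_h: int, grid_w: int, bits_per_token: int, frames: int = 1) -> float:
    """Size of token ids packed at bits_per_token bits each, in bytes."""
    return grid_h * grid_w * bits_per_token * frames / 8


# spatial only: one 128x128 frame -> one 16x16 token grid, 18 bits per token
spatial = raw_bytes(128, 128) / token_bytes(16, 16, 18)

# spatial + temporal: 17 input frames -> 5 latent token grids
spatiotemporal = raw_bytes(128, 128, frames=17) / token_bytes(16, 16, 18, frames=5)

print(f"per frame: {raw_bytes(128, 128)} B -> {token_bytes(16, 16, 18):.0f} B ({spatial:.2f}x)")
# per frame: 49152 B -> 576 B (85.33x)
print(f"per 17-frame chunk: {spatiotemporal:.2f}x")
# per 17-frame chunk: 290.13x
```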

the test

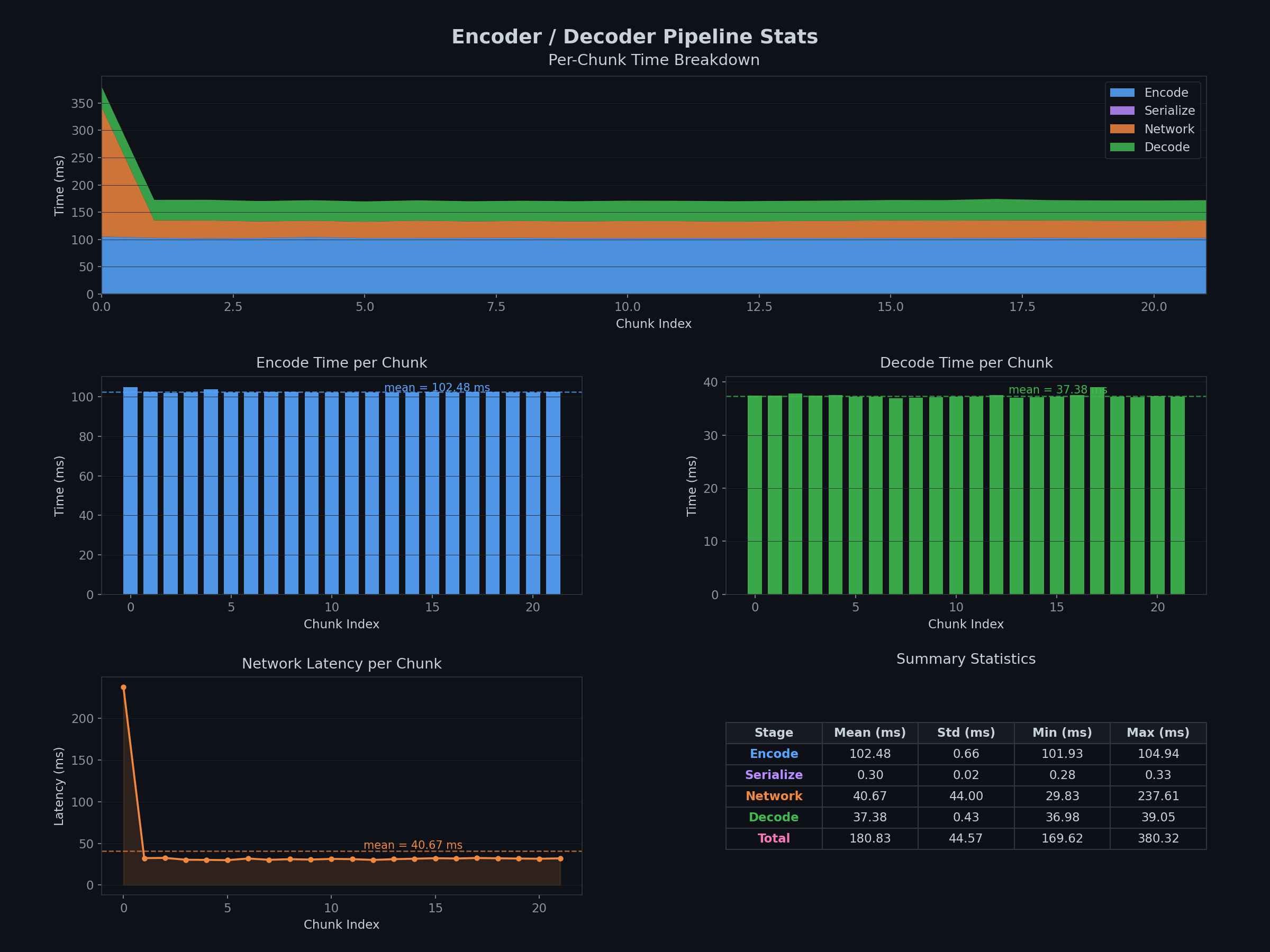

so i went with a simple test setup:

- livekit as the transport layer

- the client encodes the video stream in 17-frame chunks, then pushes the tokens to a livekit room

- the server decodes the tokens back into 17-frame chunks, then pushes the reconstructed video stream back to the livekit room (so we can compare it visually with the original)
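on the wire, the client only needs to ship token ids. the post doesn't describe the actual payload format, so here is a hypothetical one: a minimal python sketch, assuming numpy and assuming each 17-frame chunk comes out of the tokenizer as a 5x16x16 array of integer ids below 2^18, that packs the ids into a tight 18-bit bitstream and back. `pack_tokens` and `unpack_tokens` are made-up names, not part of open-magvit-2 or livekit.

```python
from typing import Tuple

import numpy as np

BITS_PER_TOKEN = 18  # assumption: codebook of 2**18 entries, per the math above


def pack_tokens(tokens: np.ndarray, bits: int = BITS_PER_TOKEN) -> bytes:
    """Pack non-negative integer token ids into a tight, msb-first bitstream."""
    flat = tokens.astype(np.uint32).ravel()
    if flat.size and int(flat.max()) >= (1 << bits):
        raise ValueError("token id does not fit in the given bit width")
    # expand each id into `bits` bits, most significant bit first
    shifts = np.arange(bits - 1, -1, -1, dtype=np.uint32)
    bit_matrix = ((flat[:, None] >> shifts) & 1).astype(np.uint8)
    # packbits pads the final byte with zeros if needed
    return np.packbits(bit_matrix.ravel()).tobytes()


def unpack_tokens(payload: bytes, shape: Tuple[int, ...], bits: int = BITS_PER_TOKEN) -> np.ndarray:
    """Inverse of pack_tokens: recover an integer array of the given shape."""
    count = int(np.prod(shape))
    bit_stream = np.unpackbits(np.frombuffer(payload, dtype=np.uint8))[: count * bits]
    bit_matrix = bit_stream.reshape(count, bits).astype(np.uint32)
    weights = (1 << np.arange(bits - 1, -1, -1)).astype(np.uint32)
    return (bit_matrix * weights).sum(axis=1).reshape(shape)


# one chunk: 5 latent frames of 16x16 token ids
tokens = np.random.default_rng(0).integers(0, 2**18, size=(5, 16, 16))
payload = pack_tokens(tokens)
print(len(payload))  # 2880
```

for one chunk this gives 5x16x16x18/8 = 2,880 bytes. if a chunk covers 0.5s, as in the timings below, that's about 5,760 bytes/s, or roughly 46 kbit/s per stream, before any transport overhead.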

the result

on an m4 pro (per 0.5s chunk): encoding takes ~3s, decoding takes ~3s

on an l40 (per 0.5s chunk): encoding takes ~0.1s, decoding takes ~0.04s
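one way to read these timings is as a real-time factor: processing time divided by the media duration it covers. a small python sketch using the approximate numbers above; the helper name is hypothetical, and encode and decode are checked separately since they run on the client and the server respectively.

```python
# real-time factor = processing time / media duration covered.
# below 1.0 a stage keeps up with a live stream; above 1.0 it falls behind.
CHUNK_SECONDS = 0.5  # media duration of one chunk, as in the timings above

timings = {
    "m4 pro": {"encode": 3.0, "decode": 3.0},
    "l40": {"encode": 0.1, "decode": 0.04},
}


def real_time_factor(seconds: float, chunk_seconds: float = CHUNK_SECONDS) -> float:
    return seconds / chunk_seconds


for device, t in timings.items():
    enc = real_time_factor(t["encode"])
    dec = real_time_factor(t["decode"])
    # both stages must keep up independently, so the slower one decides
    status = "keeps up" if max(enc, dec) < 1.0 else "falls behind"
    print(f"{device}: encode {enc:.2f}x, decode {dec:.2f}x real time -> {status}")
# m4 pro: encode 6.00x, decode 6.00x real time -> falls behind
# l40: encode 0.20x, decode 0.08x real time -> keeps up
```

anything above 1.0 means chunks arrive faster than they can be processed, so on the m4 pro the backlog (and the latency) keeps growing, while the l40 has headroom to spare.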

the learnings

1. bandwidth reduction is real, but buffering is the enemy

to process video, the model takes 17 frames per inference step. this gives it temporal context across frames and improves temporal consistency. however, a full 17-frame chunk has to be buffered before it can be encoded, so the decoded stream lags the real world by about 17 frames, on top of encode, transport and decode time.
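the buffering cost is easy to see in a toy version of the client-side chunker. this is a hypothetical python helper, not the code from the repo: it holds frames until 17 have arrived, and a rough latency bound adds the chunk duration to the encode and decode times (network time is ignored).

```python
from collections import deque
from typing import Deque, Generic, List, Optional, TypeVar

T = TypeVar("T")


class ChunkBuffer(Generic[T]):
    """Hypothetical helper: holds frames until a full chunk is available."""

    def __init__(self, chunk_frames: int = 17) -> None:
        self.chunk_frames = chunk_frames
        self._frames: Deque[T] = deque()

    def push(self, frame: T) -> Optional[List[T]]:
        """Add a frame; return a complete chunk once enough frames have arrived."""
        self._frames.append(frame)
        if len(self._frames) < self.chunk_frames:
            return None  # still waiting: this wait is the buffering delay
        return [self._frames.popleft() for _ in range(self.chunk_frames)]


def worst_case_latency(chunk_seconds: float, encode_s: float, decode_s: float) -> float:
    """Rough upper bound for the oldest frame in a chunk, ignoring network time.

    The first frame of a chunk waits (almost) a whole chunk duration before
    encoding can start, then pays encode and decode time on top.
    """
    return chunk_seconds + encode_s + decode_s


buf: ChunkBuffer[int] = ChunkBuffer()
chunks = [c for c in (buf.push(i) for i in range(40)) if c is not None]
print(len(chunks), chunks[0][0], chunks[0][-1])  # 2 0 16
print(f"l40: ~{worst_case_latency(0.5, 0.1, 0.04):.2f}s")  # l40: ~0.64s
```

even on the l40, where compute is cheap, the chunk buffer dominates the latency budget.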

2. it's not worth it atm

the codec isn't optimized for devices without a compatible gpu, so it's slow on them. it's more promising when there are multiple video feeds, since the bandwidth constraint is then more pressing.

the repo

you can find the repo here.